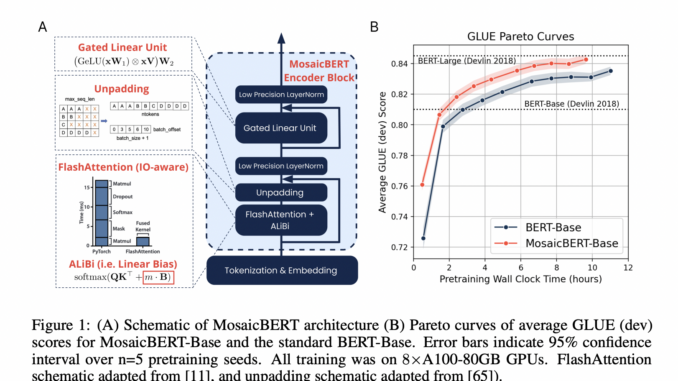

Meet MosaicBERT: A BERT-Style Encoder Architecture and Training Recipe that is Empirically Optimized for Fast Pretraining

BERT is a language model released by Google in 2018. It is based on the transformer architecture and is known for its significant improvement over previous state-of-the-art models. As such, it has […]